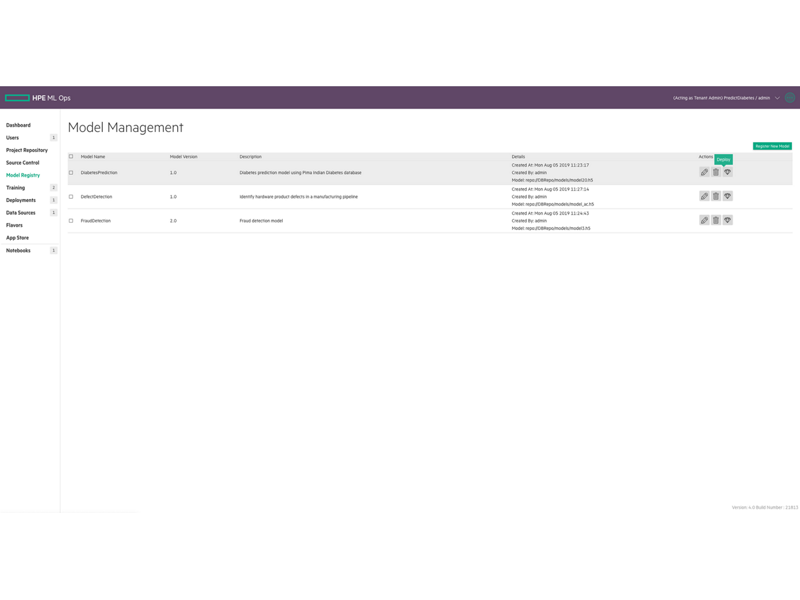

Deploy a Model 5.1- HPE Ezmeral ML Ops

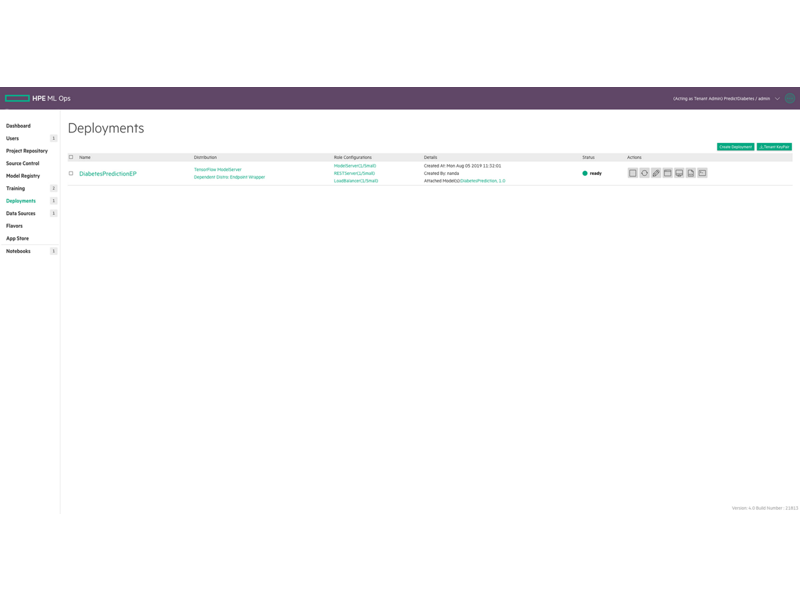

Build and Train a Model 5.1- HPE Ezmeral ML Ops

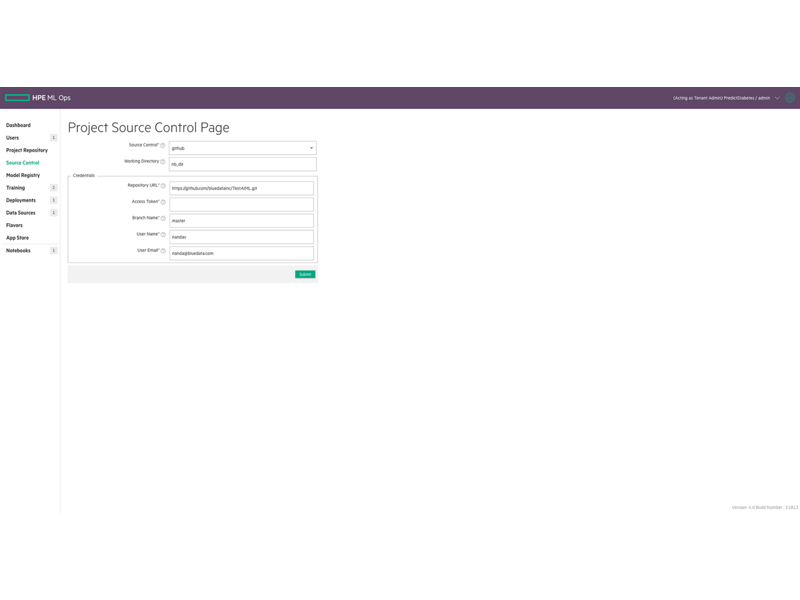

Set Up a Project Repository 5.1- HPE Ezmeral ML Ops

HPE Ezmeral Machine Learning Ops

Much like software teams before DevOps, data science organizations still spend significant time and effort moving projects from development to production. Model version control and code sharing are manual, and tools and frameworks are not standardized, which makes productizing machine learning models tedious and time-consuming.

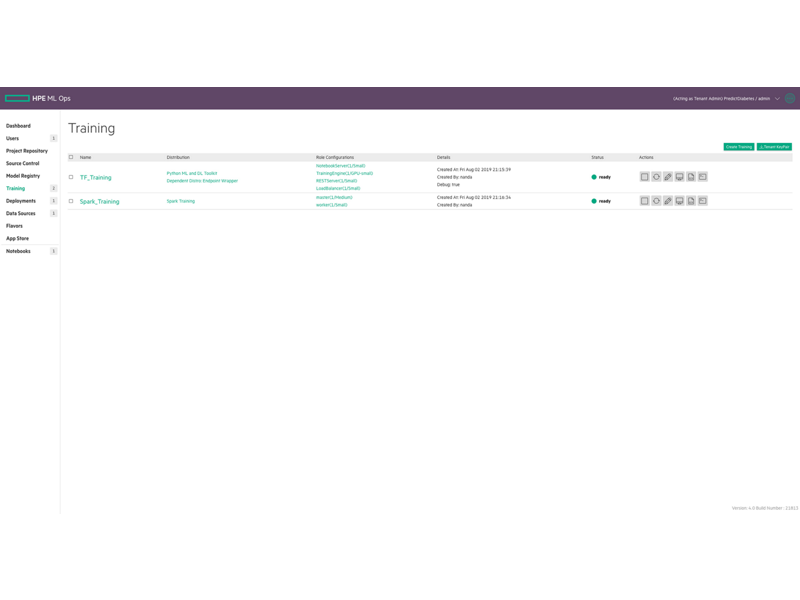

HPE Ezmeral Machine Learning Ops (HPE Ezmeral ML Ops) extends the capabilities of HPE Ezmeral Runtime Enterprise and brings DevOps-like agility to enterprise machine learning. With HPE Ezmeral ML Ops, enterprises can implement DevOps processes to standardize their ML workflows.

HPE Ezmeral ML Ops gives data science teams a platform for their end-to-end data science needs, with the flexibility to run machine learning (ML) or deep learning (DL) workloads on-premises, in multiple public clouds, or in a hybrid model, and to respond to dynamic business requirements across a variety of use cases.

Maximize your HPE Ezmeral Machine Learning Ops

What's New

- KubeFlow 1.3 (security hardened) and Model Monitoring

- Spark Operator Add-on, Spark History Server, Spark Thrift Server, Apache Livy, and Hive Metastore

- Delta Lake support

- Spark versions supported: Apache Spark 2.4.7 and Apache Spark 3.1.2

- HPE Ezmeral Runtime Analytics for Apache Spark

- New HPE Ezmeral Runtime Enterprise UI with enhanced UX for data scientists; packaged libraries for a simplified coding experience; and notebook magics for KubeFlow

Manage and provision infrastructure through an intuitive graphical user interface.

Kubernetes® is a registered trademark of the Linux Foundation in the United States and other countries, and is used pursuant to a license from the Linux Foundation. LINUX FOUNDATION and YOCTO PROJECT are registered trademarks of the Linux Foundation.
